jogramop-framework

A framework for joint grasp and motion planning in confined spaces

This is a work by Martin Rudorfer, Jiri Hartvich, Vojtech Vonasek (Aston University, Birmingham, UK & Czech Technical University, Prague, CZ) and was presented at the 13th International Workshop on Robot Motion and Control - RoMoCo’24.

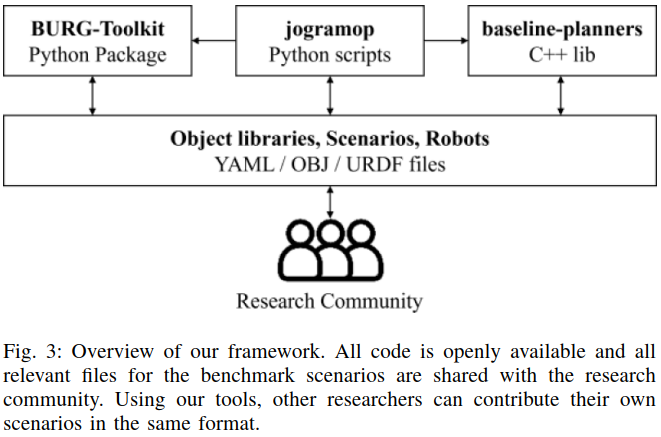

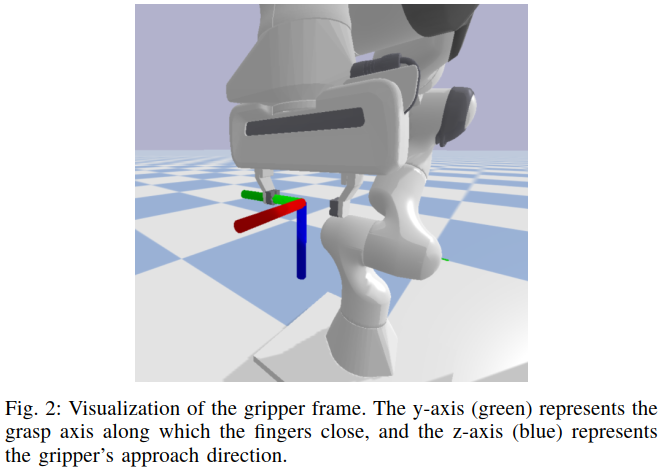

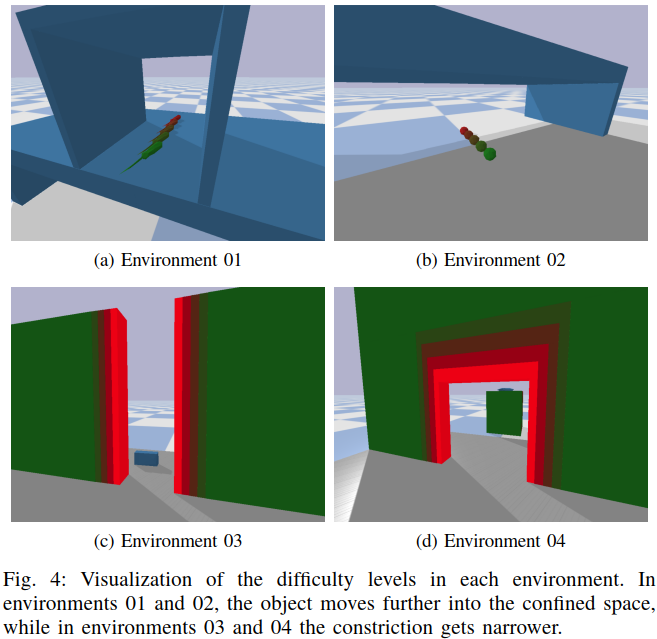

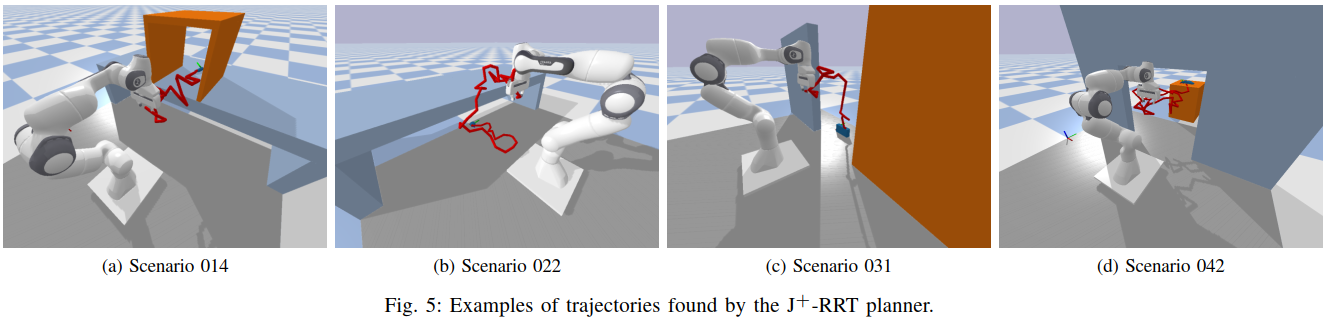

Abstract: Robotic grasping is a fundamental skill across all domains of robot applications. There is a large body of research for grasping objects in table-top scenarios, where finding suitable grasps is the main challenge. In this work, we are interested in scenarios where the objects are in confined spaces and hence particularly difficult to reach. Planning how the robot approaches the object becomes a major part of the challenge, giving rise to methods for joint grasp and motion planning. The framework proposed in this paper provides 20 benchmark scenarios with systematically increasing diffi- culty, realistic objects with precomputed grasp annotations, and tools to create and share more scenarios. We further provide two baseline planners and evaluate them on the scenarios, demonstrating that the proposed difficulty levels indeed offer a meaningful progression. We invite the research community to build upon this framework by making all components publicly available as open source.

The components can be found in the following repositories:

- github.com/mrudorfer/jogramop-framework - benchmark scenarios & scripts for scenario creation, visualization, and evaluation (Python)

- github.com/Hartvi/jogramop-planners - the baseline planners (C++)

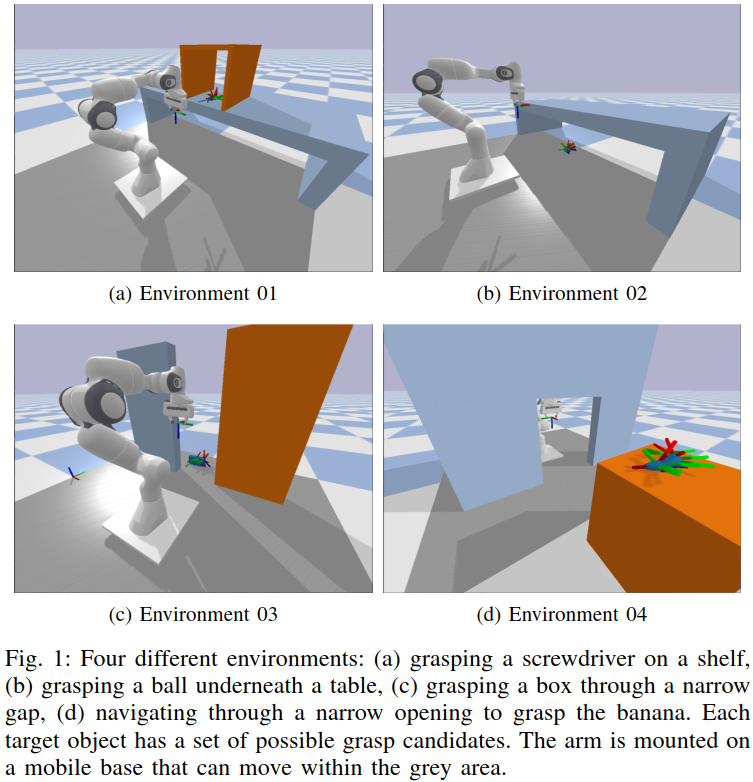

You can find more details in the respective repositories. Here are some pictures outlining the key features of the work: